Markov Decision Processes and Reinforcement Learning

We study both the theory and the applications of Markov decision processes. Our theoretical work has focused on developing successive approximation algorithms and on reducing the dimension of the function space. In parallel, we study machine learning techniques for tackling large-scale dynamic optimization problems. In particular, we address problems involving multiple decision makers with multi-agent reinforcement learning.

Topics:

- New successive approximation algorithms for Markov decision processes

- Sampling-based optimization for high-dimensional Markov decision processes

- Dimension reduction for Markov decision processes

- Multi-agent reinforcement learning in collaborative and competitive environments

- Deep reinforcement learning for large-scale dynamic optimization
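
Successive approximation for a discounted Markov decision process is, in its most basic form, value iteration: apply the Bellman optimality operator repeatedly until successive value functions agree to within a tolerance. The sketch below is that textbook baseline, not one of the new algorithms listed above, and it runs on a hypothetical two-state, two-action MDP whose transitions, rewards, and discount factor are made up for illustration.

```python
import numpy as np


def value_iteration(P, R, gamma, tol=1e-10, max_iter=10_000):
    """Textbook value iteration (successive approximation) for a finite discounted MDP.

    P[a, s, s2]: probability of moving from state s to s2 under action a, shape (A, S, S).
    R[a, s]: expected one-step reward of action a in state s, shape (A, S).
    Returns the approximate optimal value function and a greedy policy.
    """
    v = np.zeros(P.shape[1])
    for _ in range(max_iter):
        # Bellman optimality operator: Q[a, s] = R[a, s] + gamma * sum_s2 P[a, s, s2] * v[s2]
        v_new = (R + gamma * (P @ v)).max(axis=0)
        converged = np.max(np.abs(v_new - v)) < tol  # sup-norm change between iterates
        v = v_new
        if converged:
            break
    policy = (R + gamma * (P @ v)).argmax(axis=0)  # greedy policy w.r.t. the final iterate
    return v, policy


# Hypothetical MDP: action 0 keeps the current state, action 1 switches to the other state;
# only staying in state 1 earns a reward (1 per step).
P = np.array([[[1.0, 0.0], [0.0, 1.0]],
              [[0.0, 1.0], [1.0, 0.0]]])
R = np.array([[0.0, 1.0],
              [0.0, 0.0]])
v, policy = value_iteration(P, R, gamma=0.9)
print(v, policy)  # v is close to [9, 10]; policy is [1, 0]
```

Staying in state 1 forever is worth 1 / (1 - 0.9) = 10, and state 0 is worth 0.9 * 10 = 9 after one switch. Because the Bellman operator is a gamma-contraction in the sup norm, the iterates converge geometrically for any gamma < 1.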

Applications

- Dynamic pricing of multiple products with sales milestones

- Infrastructure management with multiple HVAC systems

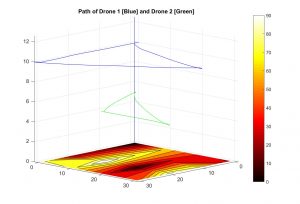

- Search and rescue optimization for a fleet of drones

- Bayesian control chart for multi-variate process

- Dynamic control of service systems

- Predictive physical asset management

|

Optimal paths for two drones with search and rescue missionThe drones are stationed in the upper corner where they can be charged. The drones are to be returned before the depletion of the battery to the station, while monitoring the area for search and rescue in a coordinated fashion. |

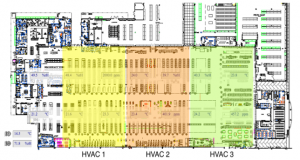

Reinforcement learning for building management

Three agents control the HVAC systems of a large retail store, with the objective of minimizing total energy spending while maintaining a comfortable atmosphere in the building throughout the day.

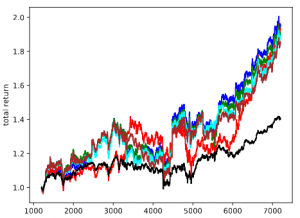

Deep recurrent reinforcement learning for algorithmic trading

A reinforcement learning algorithm based on a deep recurrent neural network exercises continuous control over multiple assets, with the objective of maximizing portfolio return subject to financial constraints.

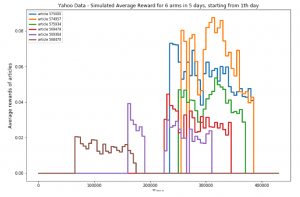

Non-stationary multi-armed bandits for online recommendation

A Thompson sampling algorithm was developed for piecewise non-stationary bandits and applied to click-through-rate maximization using data from Yahoo!
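
A common, simple way to make Thompson sampling cope with piecewise non-stationary click-through rates is to discount past observations, so that each arm's Beta posterior gradually forgets stale data. The sketch below shows that discounted variant for Bernoulli (click / no click) rewards. The discount factor, the two-arm environment, and its switching click rates are hypothetical; the sketch does not use the Yahoo! data and should not be read as the algorithm developed in this work.

```python
import numpy as np


def discounted_thompson_sampling(click_rates, horizon, gamma=0.98, seed=0):
    """Discounted Thompson sampling for Bernoulli bandits with drifting click-through rates.

    click_rates(t) returns the (unknown to the learner) click probability of each arm at time t.
    Each arm keeps discounted success/failure counts; gamma < 1 forgets old data geometrically
    (an effective memory of about 1 / (1 - gamma) steps).
    """
    rng = np.random.default_rng(seed)
    n_arms = len(click_rates(0))
    successes, failures = np.zeros(n_arms), np.zeros(n_arms)
    arms, rewards = np.empty(horizon, dtype=int), np.empty(horizon)
    for t in range(horizon):
        # Draw a plausible click rate per arm from its Beta(1 + S, 1 + F) posterior; play the best draw.
        arm = int(np.argmax(rng.beta(1.0 + successes, 1.0 + failures)))
        reward = float(rng.random() < click_rates(t)[arm])
        # Discount every arm's counts, then record the new observation for the played arm.
        successes *= gamma
        failures *= gamma
        successes[arm] += reward
        failures[arm] += 1.0 - reward
        arms[t], rewards[t] = arm, reward
    return arms, rewards


def piecewise_rates(t):
    """Hypothetical environment: arm 0 is better until t = 1000, then arm 1 is."""
    return [0.7, 0.2] if t < 1000 else [0.2, 0.7]


arms, rewards = discounted_thompson_sampling(piecewise_rates, horizon=2000)
print("arm 0 share before the change:", np.mean(arms[:1000] == 0))
print("arm 1 share after the change:", np.mean(arms[1000:] == 1))
```

A smaller gamma adapts faster after a change but keeps exploring more while the rates are stable.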

Financial Engineering

We study financial engineering problems that involve stochastic asset dynamics. We have studied the pricing of non-trivial derivatives, such as swing options and callable convertible bonds, and have developed statistical arbitrage algorithms, such as optimal pairs trading, as well as risk-rationing models.

Topics:

- Analysis of first passage times

- Lattice models for multiple non-stationary underlying assets

- Finite difference method and its variants
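
As a minimal illustration of the plain finite difference method (not of the variants studied here), the sketch below prices a European put with the explicit scheme for the Black-Scholes PDE and compares the result with the closed-form price. The contract, market parameters, grid sizes, and the boundary conditions V(0) = K exp(-r tau) and V(S_max) = 0 are assumptions chosen for illustration. The explicit scheme is only stable when the time step is small relative to the price step, which the code checks through the coefficient of V_i.

```python
import math

import numpy as np


def bs_put_explicit_fd(s0, strike, r, sigma, maturity, s_max, n_space, n_time):
    """European put by the explicit finite-difference scheme for the Black-Scholes PDE
    V_tau = 0.5 * sigma^2 * S^2 * V_SS + r * S * V_S - r * V, marching in time-to-maturity tau.

    Grid: S_i = i * dS (i = 0..n_space, dS = s_max / n_space), dt = maturity / n_time.
    Boundaries (assumed): V(0, tau) = K * exp(-r * tau) and V(s_max, tau) = 0.
    """
    ds, dt = s_max / n_space, maturity / n_time
    i = np.arange(n_space + 1)
    # Central-difference coefficients multiplying V_{i-1}, V_i, V_{i+1} at the previous time level.
    a = 0.5 * dt * (sigma**2 * i**2 - r * i)
    b = 1.0 - dt * (sigma**2 * i**2 + r)
    c = 0.5 * dt * (sigma**2 * i**2 + r * i)
    if b.min() < 0.0:  # usual positivity condition on the diagonal coefficient
        raise ValueError("dt too large for the explicit scheme; increase n_time")
    v = np.maximum(strike - i * ds, 0.0)  # payoff at maturity (tau = 0)
    for k in range(1, n_time + 1):
        v_new = np.empty_like(v)
        v_new[1:-1] = a[1:-1] * v[:-2] + b[1:-1] * v[1:-1] + c[1:-1] * v[2:]
        v_new[0] = strike * math.exp(-r * k * dt)
        v_new[-1] = 0.0
        v = v_new
    return float(np.interp(s0, i * ds, v))


def bs_put_closed_form(s0, strike, r, sigma, maturity):
    """Black-Scholes European put price, used here only to check the grid solution."""
    def norm_cdf(x):
        return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

    d1 = (math.log(s0 / strike) + (r + 0.5 * sigma**2) * maturity) / (sigma * math.sqrt(maturity))
    d2 = d1 - sigma * math.sqrt(maturity)
    return strike * math.exp(-r * maturity) * norm_cdf(-d2) - s0 * norm_cdf(-d1)


# Hypothetical contract: at-the-money one-year put, r = 5%, sigma = 20%.
fd_price = bs_put_explicit_fd(100.0, 100.0, 0.05, 0.2, 1.0, s_max=200.0, n_space=100, n_time=1000)
exact_price = bs_put_closed_form(100.0, 100.0, 0.05, 0.2, 1.0)
print(fd_price, exact_price)
```

An implicit or Crank-Nicolson scheme removes the time-step restriction at the cost of solving a tridiagonal system at every step.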

Applications

- Optimal thresholds for pairs trading

- Valuation of real options with long maturity

- Valuation of game options

- Optimal risk rationing models for large investment companies

- Valuation of options with an optimally managed portfolio

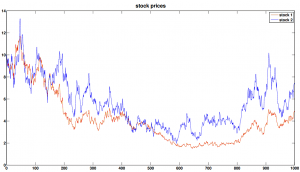

Optimal pairs trading

The figure shows the price processes of two correlated assets (Coke and Pepsi). An Ornstein-Uhlenbeck (OU) process is defined from the two price processes. Its expected first passage time, expressed as an infinite sum of polynomial terms, is then used to compute the optimal trading policy.
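
The expected first passage time can be cross-checked by simulation. The sketch below estimates, with an Euler-Maruyama Monte Carlo scheme, the mean time for an OU spread dX = mu (theta - X) dt + sigma dW started at x0 to first reach a given level. It is a brute-force stand-in for the series expression, not a reproduction of it; the parameter values are hypothetical, and neither the construction of the spread from the two prices nor the choice of trading thresholds is modeled here.

```python
import numpy as np


def ou_mean_first_passage(x0, level, mu, theta, sigma, dt=1e-3, n_paths=20_000, t_max=50.0, seed=0):
    """Monte Carlo estimate of E[tau] for the OU process dX = mu * (theta - X) dt + sigma dW,
    X_0 = x0, where tau is the first time X reaches `level`.

    Crossings are only checked on the time grid, so the estimate is slightly biased upward;
    paths that never cross are counted at t_max, which biases it downward if t_max is too small.
    """
    rng = np.random.default_rng(seed)
    x = np.full(n_paths, float(x0))
    tau = np.full(n_paths, float(t_max))
    alive = np.ones(n_paths, dtype=bool)
    downward = x0 >= level  # starting above the level means we wait for a downward crossing
    for k in range(1, int(round(t_max / dt)) + 1):
        dw = rng.standard_normal(int(alive.sum())) * np.sqrt(dt)
        x[alive] += mu * (theta - x[alive]) * dt + sigma * dw
        crossed = (x <= level) if downward else (x >= level)
        newly = alive & crossed
        tau[newly] = k * dt
        alive &= ~newly
        if not alive.any():
            break
    return float(tau.mean())


# Sanity check: with sigma = 0 the path is deterministic, x(t) = theta + (x0 - theta) * exp(-mu t),
# so the time to fall from 1 to 0.5 (theta = 0, mu = 1) is ln 2.
print(ou_mean_first_passage(1.0, 0.5, mu=1.0, theta=0.0, sigma=0.0, n_paths=10), np.log(2.0))

# Hypothetical spread: reverts to 0 at rate mu = 1 with volatility 0.5, currently at 1.0.
print(ou_mean_first_passage(1.0, 0.0, mu=1.0, theta=0.0, sigma=0.5, n_paths=5_000))
```

Increasing n_paths and shrinking dt reduce the Monte Carlo and discretization errors, respectively.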

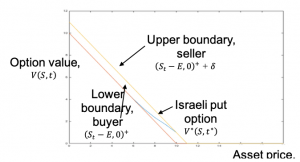

Robust valuation of Israeli options

We study the robust equilibrium of Dynkin games for the valuation of Israeli options. The well-posedness of reflected backward stochastic differential equations with two obstacles has been shown under a class of non-dominated probability measures.
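
For intuition, the non-robust, discrete-time counterpart of this valuation is a Dynkin game on a binomial lattice: the holder may exercise and receive X_n, the writer may cancel and pay Y_n >= X_n, and the value satisfies V_n = min(Y_n, max(X_n, discounted continuation)). The sketch below applies this recursion to a game put with a constant cancellation penalty on a Cox-Ross-Rubinstein tree under a single risk-neutral measure. The contract and market parameters are hypothetical, and the sketch does not capture the robust, non-dominated setting studied here.

```python
import numpy as np


def game_put_crr(s0, strike, penalty, r, sigma, maturity, n_steps):
    """Israeli (game) put on a Cox-Ross-Rubinstein binomial tree under one risk-neutral measure.

    Holder's exercise payoff X = max(K - S, 0); writer's cancellation payment Y = X + penalty.
    Dynkin-game backward recursion: V = min(Y, max(X, discounted risk-neutral continuation)).
    """
    dt = maturity / n_steps
    u = np.exp(sigma * np.sqrt(dt))
    d = 1.0 / u
    disc = np.exp(-r * dt)
    q = (np.exp(r * dt) - d) / (u - d)  # risk-neutral probability of an up move
    j = np.arange(n_steps + 1)  # number of up moves
    v = np.maximum(strike - s0 * u**j * d**(n_steps - j), 0.0)  # at maturity V = X
    for n in range(n_steps - 1, -1, -1):
        j = np.arange(n + 1)
        x = np.maximum(strike - s0 * u**j * d**(n - j), 0.0)
        cont = disc * (q * v[1:] + (1.0 - q) * v[:-1])  # node (n, j) -> (n+1, j+1) or (n+1, j)
        v = np.minimum(x + penalty, np.maximum(x, cont))
    return float(v[0])


# Hypothetical at-the-money put; a huge penalty means the writer never cancels (an American put).
for delta in (0.0, 1.0, 5.0, 1e9):
    print(delta, game_put_crr(100.0, 100.0, delta, r=0.05, sigma=0.2, maturity=1.0, n_steps=500))
```

With a zero penalty the writer cancels immediately, so the value collapses to max(K - S0, 0), which is zero here; raising the penalty moves the value up toward the American put price.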

Physical Asset Management

Physical asset management is often a major source of expense and plays a critical role in maximizing productivity. Through the Centre for Maintenance Optimization and Reliability Engineering, we apply diverse combinations of statistical and machine learning approaches to predict upcoming failures, estimate remaining useful life, and optimize maintenance actions.

Topics:

- Proportional hazards model

- Predictive analytics using machine learning and statistical models

- Optimization of the expected long-run cost
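
A common form of the proportional hazards model in condition-based maintenance uses a Weibull baseline, h(t, z) = (beta / eta) (t / eta)^(beta - 1) exp(gamma . z), where t is the asset's working age and z is a vector of condition-monitoring covariates (for example, vibration or oil-analysis readings). The sketch below evaluates this hazard and applies a simple control-limit rule that recommends preventive maintenance once the hazard exceeds a threshold. The parameter values, covariates, and threshold are hypothetical; in practice the parameters would be estimated from failure and inspection data and the threshold chosen by cost optimization.

```python
import numpy as np


def weibull_phm_hazard(age, covariates, beta, eta, gamma):
    """Weibull proportional hazards model: h(t, z) = (beta / eta) * (t / eta) ** (beta - 1) * exp(gamma . z)."""
    baseline = (beta / eta) * (age / eta) ** (beta - 1.0)
    return float(baseline * np.exp(np.dot(gamma, covariates)))


def recommend_action(age, covariates, beta, eta, gamma, hazard_limit):
    """Hypothetical control-limit rule: recommend preventive maintenance once the hazard exceeds hazard_limit."""
    h = weibull_phm_hazard(age, covariates, beta, eta, gamma)
    return ("preventive maintenance" if h > hazard_limit else "continue operation"), h


# Hypothetical fitted model: shape 2, scale 1000 h, two condition-monitoring covariates.
params = dict(beta=2.0, eta=1000.0, gamma=np.array([0.5, 1.2]), hazard_limit=0.002)
healthy = recommend_action(500.0, np.array([0.0, 0.0]), **params)   # hazard 0.001: continue
degraded = recommend_action(500.0, np.array([1.0, 0.5]), **params)  # hazard 0.001 * exp(1.1): maintain
print(healthy, degraded)
```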

Applications

- Data-driven digital twin

- Signal analysis and prediction

- Optimal MRO (Maintenance, Repair and Operations)

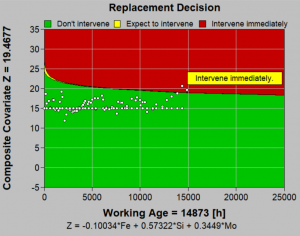

Optimization of MRO

The figure shows a screenshot of EXAKT, a standalone solution developed at the Centre for Maintenance Optimization and Reliability Engineering, which recommends optimal preventive maintenance actions considering remaining useful life and economic factors.
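
The economic side of such recommendations is often framed as minimizing the expected long-run cost per unit time. The classic age-replacement model is a simple instance: replace preventively at age T (cost c_p) or at failure (cost c_f > c_p). By the renewal-reward theorem, the long-run cost rate is C(T) = [c_p R(T) + c_f (1 - R(T))] / integral_0^T R(t) dt, where R is the reliability function. The sketch below minimizes C(T) over a grid for a Weibull lifetime with hypothetical costs and parameters; it illustrates the cost criterion and is not a description of how EXAKT computes its recommendations.

```python
import math

import numpy as np


def age_replacement_cost_rate(T, beta, eta, c_p, c_f, n_grid=2000):
    """Long-run expected cost per unit time under an age-replacement policy.

    The item is replaced preventively at age T (cost c_p) or correctively at failure (cost c_f).
    By the renewal-reward theorem the rate is E[cycle cost] / E[cycle length], with
    E[cycle cost] = c_p * R(T) + c_f * (1 - R(T)) and E[cycle length] = integral_0^T R(t) dt,
    where R(t) = exp(-(t / eta) ** beta) is the Weibull reliability function.
    """
    t = np.linspace(0.0, T, n_grid + 1)
    rel = np.exp(-(t / eta) ** beta)
    cycle_length = np.sum(0.5 * (rel[1:] + rel[:-1]) * np.diff(t))  # trapezoidal rule
    cycle_cost = c_p * rel[-1] + c_f * (1.0 - rel[-1])
    return cycle_cost / cycle_length


def optimal_replacement_age(beta, eta, c_p, c_f, ages):
    """Grid search for the replacement age with the lowest long-run cost rate."""
    costs = np.array([age_replacement_cost_rate(T, beta, eta, c_p, c_f) for T in ages])
    k = int(np.argmin(costs))
    return float(ages[k]), float(costs[k])


# Hypothetical wear-out item: Weibull shape 2.5, scale 1000 h; a failure costs 10x a planned replacement.
beta, eta, c_p, c_f = 2.5, 1000.0, 1.0, 10.0
ages = np.linspace(50.0, 3000.0, 60)
t_star, c_star = optimal_replacement_age(beta, eta, c_p, c_f, ages)
run_to_failure = c_f / (eta * math.gamma(1.0 + 1.0 / beta))  # cost rate if never replaced preventively
print(t_star, c_star, run_to_failure)
```

With a shape parameter of 1 (exponential lifetimes, no wear-out), C(T) decreases in T, so preventive replacement never pays; a finite optimal age appears when the hazard grows without bound, as it does for beta > 1.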